We ran the numbers on a realistic 3-step support pipeline across three models that are commonly considered for production use. Here's what the math says.

The Workflow

A typical AI-powered support pipeline processes each incoming customer email through three steps:

No RAG, no tool use, no multi-turn conversation. Just three sequential LLM calls per customer email.

Token Estimates

Based on typical customer support emails (50–200 words incoming, structured outputs for steps 1–2, a 200-word response for step 3):

| Step | Input Tokens | Output Tokens |

|---|---|---|

| Classify Intent | ~200 | ~20 |

| Extract Details | ~350 | ~150 |

| Draft Response | ~500 | ~400 |

| Total per email | ~1,050 | ~570 |

Cost Per Email

Using current published API pricing (March 2026, per million tokens):

| Model | Input Price | Output Price | Cost / Email |

|---|---|---|---|

| GPT-4o (OpenAI) | $2.50 | $10.00 | $0.00833 |

| Claude Sonnet 4 (Anthropic) | $3.00 | $15.00 | $0.01170 |

| Grok 4.1 Fast (xAI) | $0.20 | $0.50 | $0.00050 |

Monthly Cost at Scale

Most support teams process anywhere from 50 to 500 emails per day through AI. Here's what that looks like monthly:

| Volume | GPT-4o | Claude Sonnet 4 | Grok 4.1 Fast |

|---|---|---|---|

| 50/day | $12.50/mo | $17.55/mo | $0.75/mo |

| 100/day | $25.00/mo | $35.10/mo | $1.50/mo |

| 200/day | $50.00/mo | $70.20/mo | $3.00/mo |

| 500/day | $125.00/mo | $175.50/mo | $7.50/mo |

| 1,000/day | $250.00/mo | $351.00/mo | $15.00/mo |

At 500 emails/day, you'd save $117.50/month switching from GPT-4o to Grok 4.1 Fast, or $168/month switching from Claude Sonnet.

But What About Quality?

Cost is only half the equation. If Grok 4.1 Fast produces worse classifications or awkward responses, the savings don't matter.

Here's where it gets interesting. Grok 4.1 Fast is a reasoning model — it scores 64 on LMSYS quality benchmarks, close to Grok 4's 65 and competitive with GPT-4o. For structured tasks like classification and entity extraction (Steps 1 and 2), the quality gap between economy and premium models is typically small — you're asking for a category label, not a creative essay.

Step 3 (response drafting) is where model quality matters most. The tone, empathy, and naturalness of the response directly affects customer satisfaction.

A hybrid pipeline at 500 emails/day:

| Step | Model | Monthly Cost |

|---|---|---|

| Classify | Grok 4.1 Fast | $0.06/mo |

| Extract | Grok 4.1 Fast | $0.38/mo |

| Respond | Claude Sonnet 4 | $105.30/mo |

| Total | $105.74/mo |

That's 15% cheaper than running everything through GPT-4o ($125/mo) while getting Claude's writing quality for the customer-facing output.

Try It Yourself

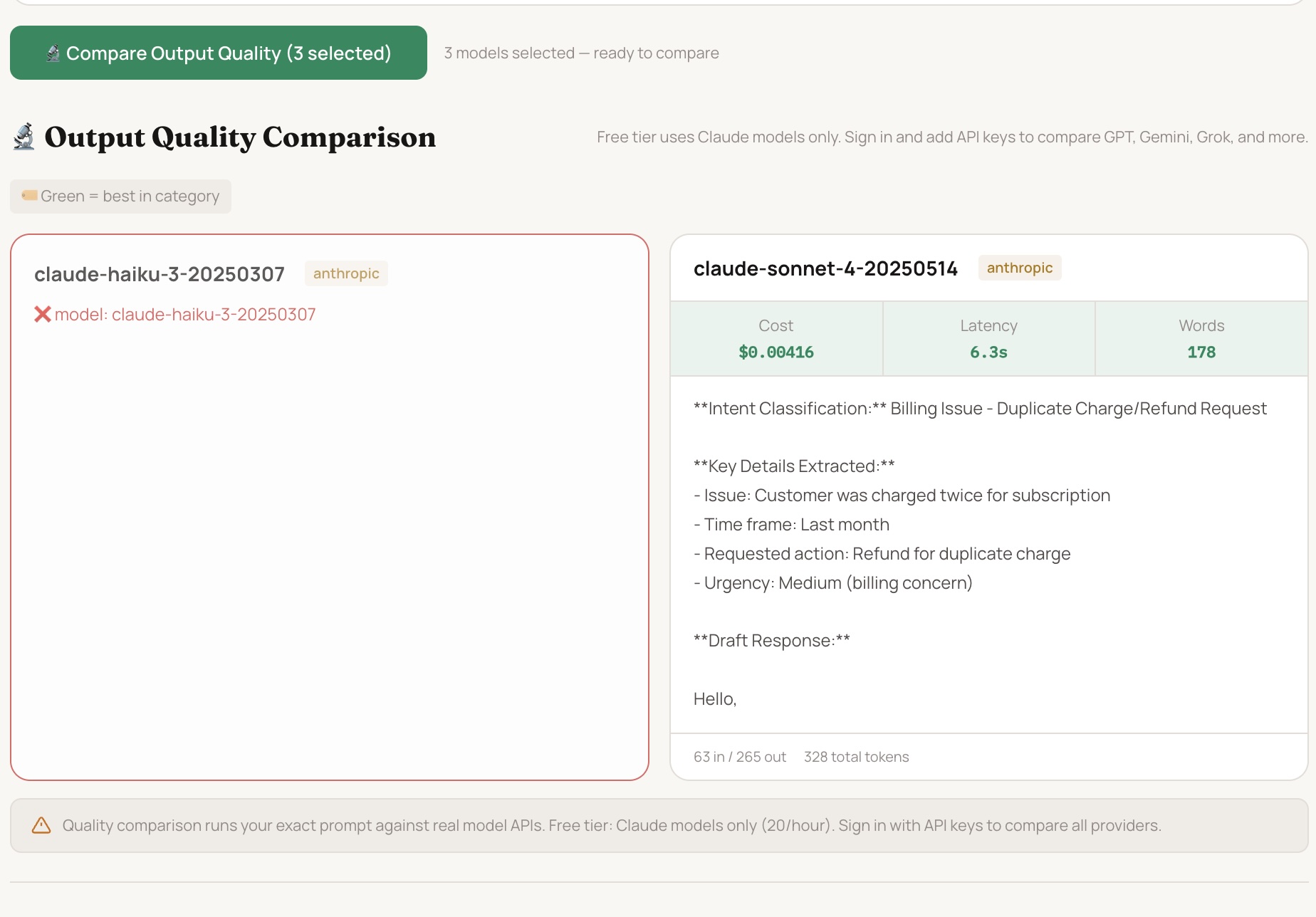

We built a free calculator that does this math for any prompt, across 35+ models. No signup required.

Open the Free LLM Cost Calculator →Paste your actual support prompt template. Set your expected output length and daily volume. See every model's cost, sorted cheapest first. You can even select models and compare their actual outputs side by side.

Key Takeaways

Don't use one model for everything. Classification and extraction don't need GPT-4o. Use economy models for structured outputs, premium models for customer-facing text.

Grok 4.1 Fast is the current price leader. At $0.20/$0.50 per million tokens, it's the cheapest model with near-frontier quality for most support tasks.

Claude Sonnet writes the best customer responses. If tone and empathy matter (they do in support), Sonnet is worth the premium — but only for the response step.

The difference is 10–20x, not 10–20%. Model selection isn't a marginal optimization. It's the difference between a $15/month AI bill and a $350/month AI bill for the same throughput.

Check your math before you commit. Pricing changes frequently. Use tokenlens.co/calculator to verify costs with current pricing before making model decisions.

All pricing data verified against official provider documentation as of March 2026. Estimates based on typical token usage patterns. Actual costs depend on prompt length, output variability, and provider pricing changes. TokenLens does not charge for LLM usage — costs are billed directly by your provider.

Built by PrArySoft. Try the free calculator at tokenlens.co/calculator.